Hash keys can be used instead of sequence numbers to build surrogate keys in a Data Warehouse. This is often done in Data Vault environments, and there are some good reasons to do that. But there are also several issues and restrictions with hash keys. So, use hash keys only if you have good reasons for them. And if you decide to use hash keys in your Oracle Data Warehouse, make sure that you do it the right way.

A hash function is a deterministic function that distributes a (usually high) number of input values to a set of distinct hash values. Hash algorithms are used for different purposes in computer science (e.g. compilers and parsers, in cryptography or for key generators). In the Oracle database, hash functions are used for hash joins, hash partitioning and hash clusters – to name just a few examples.

To illustrate the principle of a hash function, I use the expression MOD(n, 7) as a hash function in the following example. The function is deterministic, i.e. for the same input value, the result is always the same. The result returns the remainder of a division by 7, therefore always a number between 0 and 6.

Basic principle of a hash function

As you can see in the example above, the hash values are not unique. For the input values 15 and 22, the hash key (1) is the same. That is called a hash collision. For many situations, this is not an issue. If a hash algorithm is used for equal distribution of rows to multiple hash partitions, it is clear that more than one row is stored in each partition. But for a hash key generator, hash collisions have to be avoided.

In this blog post, I will write about a special use case of hash functions: Building hash keys in a Data Warehouse to replace the business key with a surrogate primary key. In this case, the business key (one or more attributes of a source table) is the input value. The result of the hash function is a hash key. Hash keys are often used in Data Warehouses with Data Vault models, they are even one of the main concepts of Data Vault 2.0. But hash keys can also be used in dimensional models as primary keys of the dimension tables, in historized head & version models, or in any 3NF data model.

Sequence Numbers or Hash Keys?

There are many discussions about the right approach to build surrogate keys. Should I use sequence numbers or hash keys? Or even UUIDs? As usual in real life, there are advantages and disadvantages for each of the concepts. Unfortunately, there is an almost religious “key discussion” in the Data Vault community. What is my opinion? I don’t want to be a “good guy” or a “bad guy”, depending on whether I use sequence numbers or hash keys. For me as a Data Warehouse consultant, it is important to understand the principle of the different concepts, their advantages and disadvantages. Then I can decide for each project which approach fits best for the specific requirements and technology. Here just a short summary of the two approaches:

Sequence Numbers: For every new business key in a Hub, a new sequence number is created. In Oracle, this can be implemented classically with a sequence, or in Oracle 12c with an identity column. To determine the foreign keys on Links or Satellites, a key lookup on the Hub is needed. For each business key, the corresponding surrogate key is derived from the Hub. Key lookup operations are very common in Data Warehouses and are available in every ETL tool. In SQL, a key lookup is usually just a (left outer) join on the lookup table (here on the Hub) to determine the correct sequence number.

Sequence numbers with key lookup

The main issue of this approach is the load dependency: All Hubs must be loaded before the Links and Satellites to guarantee that all key lookups return a result. Additionally, the sequence numbers in different environments (e.g. development, test and production database) are not in sync. If data has to be compared or moved from one environment to another, the sequence numbers must be recalculated. The third challenge is the reload of data from scratch: If – for any reason – a table is truncated and initially loaded, each row will get a new sequence number, so the dependent tables must be reloaded as well.

Hash Keys: If a hash algorithm is used to determine the surrogate keys, the hash key can be derived from the business key directly, without a key lookup. Because a hash function is deterministic, it will always return the same hash key for the same business key: On the Hub as well as on the referring Links and Satellites. Therefore, there is no special load order required, all tables can (theoretically) be loaded in parallel. And because of the deterministic function, the hash keys would be the same in multiple environments and after a reload of the same data.

Hash keys calculated with hash function

The simultaneous load of all Hubs, Links and Satellites is not always possible. If you have foreign key constraints defined on your Data Vault tables, you still have to load the Hubs before the Links and Satellites. One option would be to omit the constraints (which I definitely not recommend). Reliable constraints can help here (see my blog post Foreign Key Constraints in an Oracle Data Warehouse). But if you don’t rely on the source data and want to prove data quality with constraints, you still need a predefined load order, even with hash keys.

Another issue of hash keys is the risk of hash collisions. Hence it is important to choose a suitable hash function. The probability of a hash collision with MD5 or SHA-1 algorithm (see below) is very small – but theoretically still exists. So, what happens if two business keys would result in the same hash key? In the best case, the load job fails with a primary key violation. In the worst case, data of two different business entities is mixed, e.g. sales orders of one customer are assigned to another customer. Sounds horrible, but in many Data Warehouses, this is not a real issue. The risk of wrong results because of bad source data quality or development bugs in the ETL jobs is higher than the chance of a hash collision.

The decision whether to use sequence numbers or hash keys depends on many aspects. If you have good reasons to use hash keys (or sequence numbers), then use them. But don’t do it just because “it is said to be used”.

The Wrong Way: ORA_HASH

A well-known hash function in Oracle is the SQL function ORA_HASH. This is a useful function to distribute data to multiple subsets, but the generated hash keys are far away from uniqueness. Many people are impressed by the maximum number of buckets (i.e. the number of possible return values) of this hash function. The default (and highest possible value) of the optional parameter MAX_BUCKET is 4294967295. That means, the hash function returns 32 bits – not very much. So, hash collisions will occur frequently, even with small data sets (see Connor McDonald’s blog post). The probability of two input values with the same hash key is quite high. With 9300 input rows, the probability is 1%, with 50’000 rows already 25%, and with 77’000 rows 50%.

The mathematical background for this behavior is the so-called Birthday Problem: What is the probability that two people have their birthday on the same date of the year, depending on the total number of people? Did you know, that with only 23 people, this probability is already 50%? A good explanation of the birthday problem can be found on Wikipedia. If you don’t like mathematics and prefer more practical exercises, run the following query on an Oracle database:

WITH input_data AS

(SELECT ROWNUM n, ORA_HASH(ROWNUM) hash

FROM dual CONNECT BY ROWNUM <= 1000000)

SELECT hash, COUNT(*)

FROM input_data

GROUP BY hash HAVING COUNT(*) > 1;

The result is 122 hash collisions for an input of 1 million rows. With 100’000 rows, there are already 3 collisions. Definitely not a good choice for a Data Warehouse! So, we need a better hash function.

Cryptographic Hash Functions in Oracle

Several common cryptographic hash algorithms are available that are suitable to generate (almost) unique hash keys with a very small probability of hash collisions. The most famous ones are MD5 (message-digest algorithm) as well as SHA-1 and SHA-2 (secure hash algorithm). SHA-2 consists of multiple variants with a different number of output bits. The higher the number of output bits, the smaller is the probability of a hash collision.

The Oracle database contains different implementations of cryptographic hash functions, but not all of them are available in every version. Here an overview of the different packages and functions.

DBMS_OBFUSCATION_TOOLKIT: This PL/SQL package contains some overloaded procedures and functions to generate MD5 hash keys. The package is deprecated since Oracle 11.2 and should not be used anymore. Because of the overloaded versions of the MD5 function, it is not possible to use the function directly in SQL. Instead, a PL/SQL function must be written to be called in SQL.

CREATE OR REPLACE

FUNCTION hash_key (v_input VARCHAR2)

RETURN dbms_obfuscation_toolkit.varchar2_checksum

AS

BEGIN

RETURN dbms_obfuscation_toolkit.md5(input_string => v_input);

END hash_key;

HASH_MD4 CONSTANT PLS_INTEGER := 1;

HASH_MD5 CONSTANT PLS_INTEGER := 2;

HASH_SH1 CONSTANT PLS_INTEGER := 3;

HASH_SH256 CONSTANT PLS_INTEGER := 4;

HASH_SH384 CONSTANT PLS_INTEGER := 5;

HASH_SH512 CONSTANT PLS_INTEGER := 6;

Unfortunately, the constants cannot be used directly in SQL. Instead, the corresponding numbers must be added as parameters, and an explicit type conversion to RAW, CLOB or BLOB must be included in the statement. So, it is still easier to define a wrapper function in PL/SQL:

CREATE OR REPLACE

FUNCTION hash_key (v_input VARCHAR2) RETURN RAW DETERMINISTIC

AS

PRAGMA UDF;

BEGIN

RETURN dbms_crypto.hash(utl_raw.cast_to_raw(v_input), dbms_crypto.HASH_MD5);

END hash_key;

STANDARD_HASH: This new SQL function was introduced with Oracle 12.1. It supports the same hash algorithms as DBMS_CRYPTO, but is easier to use in SQL – and much faster. The first parameter is an input value of any data type except LONG and LOB. The second (optional) parameter is a string that defines the hash algorithm. Default is ‘SHA1’, other possible values are ‘MD5’, ‘SHA256’, ‘SHA384’ and ‘SHA512’. The function can be used directly in SQL:

WITH input_data AS

(SELECT ROWNUM n, STANDARD_HASH(ROWNUM, 'MD5') hash

FROM dual CONNECT BY ROWNUM <= 1000000)

SELECT hash, COUNT(*)

FROM input_data

GROUP BY hash HAVING COUNT(*) > 1;

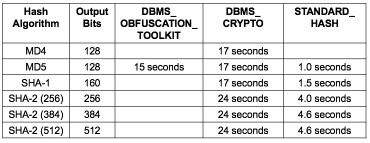

The statement generates 1 million hash keys without any hash collisions. This is also the case for all functions described above (except ORA_HASH). The different implementations of the Oracle cryptographic hash functions are all suitable to generate unique hash keys, but the performance is different. The query above was used to run all of the available hash algorithms of the PL/SQL packages and the SQL function. The following table compares the execution times.

SQL runtime to generate 1 million has keys

As you can see from the execution times, the SQL function STANDARD_HASH is much faster than DBMS_CRYPTO. So, if you decided to use hash keys in your Data Warehouse and you work with Oracle 12c, the best option is to use the function STANDARD_HASH. The default hash algorithm SHA-1 is the best compromise: 160 bits are enough to avoid hash collisions, but the performance is better than with the SHA-2 algorithms. DBMS_CRYPTO should only be used for backward compatibility, and DBMS_OBFUSCATION_TOOLKIT should not be used anymore at all.

Running the same code to produce an MD5 hash for a hard-coded string literal produces a very different hash value on Tibero 6 to the one on Oracle 11.2.0.1.0 (see below). Why?

<>

DECLARE

c_in PLS_INTEGER := 1;

c_out PLS_INTEGER := 1;

c_lang PLS_INTEGER := 0;

c_warn PLS_INTEGER := 0;

new_line CONSTANT VARCHAR2(2 CHAR) := CHR(13) || CHR(10);

test_data_b BLOB;

test_data_c CLOB := ‘This is a test value.’;

BEGIN

DBMS_LOB.CreateTemporary(test_data_b, true);

DBMS_LOB.converttoblob(test_data_b, test_data_c, LENGTH(test_data_c), c_in, c_out, DBMS_LOB.default_csid, c_lang, c_warn);

DBMS_OUTPUT.put_line(DBMS_CRYPTO.hash(test_data_b, DBMS_CRYPTO.hash_md5));

DBMS_OUTPUT.put_line(DBMS_CRYPTO.hash(test_data_b, DBMS_CRYPTO.hash_md5));

EXCEPTION

WHEN OTHERS THEN

DBMS_OUTPUT.put_line(‘—– Error —–‘

|| new_line || DBMS_UTILITY.format_error_stack()

|| new_line || ‘—– Back-Trace —–‘

|| new_line || DBMS_UTILITY.format_error_backtrace()

|| new_line || DBMS_UTILITY.format_call_stack());

END test_block;

LikeLike

Hi Michael

There can be multiple reasons for different hash values, for example upper/lower case, byte order (endianness) of the underlying operating system, different character sets. Dirk Lerner (https://tedamoh.com/about) is currently writing a blog post about the reasons for different MD5 values. As soon as the post is available, I (or Dirk) will provide the link here.

I did a quick check with your test case:

– Your PL/SQL block, executed on an Oracle 19c database, returns the hash value ‘694938CFA1398CCDB2204BEA55F6CBBD’

– The function standard_hash(‘This is a test value.’, ‘MD5’) in Oracle 19c returns ‘694938CFA1398CCDB2204BEA55F6CBBD’ (i.e. the same value)

– The function md5(‘This is a test value.’) in Spark SQL returns the hash value ‘694938cfa1398ccdb2204bea55f6cbbd’ (i.e. the same value, but in lower case)

– The function HashBytes(‘MD5’, ‘This is a test value.’) in SQL Server 2014 returns 0x694938CFA1398CCDB2204BEA55F6CBBD (i.e. the same value as in Oracle)

– For Tibero 6 I could not do any tests because I don’t know this database system

LikeLike

Many thanks for taking the time to respond, Dani. What you describe is very much in line with my own tests. I get the same value (694938CFA1398CCDB2204BEA55F6CBBD) on Oracle 11g but get a totally different one on Tibero 6 (FE1120D07EBDB936B08974B36DA41575).

I apologise, not only for the brevity of my original post, but also for assuming you would be familiar with Tibero (I had found your article as a result of a Google search specifically for Tibero pages). I realised it must be a standard algorithm but am not confident enough in my cryptography knowledge to be sure there wasn’t some server-dependent seeding mechanism that I might have been unaware of. Anyway, thanks again.

LikeLike

Hi

Is there a standard way or function of generating SHA3-512 Algorithm in Oracle?

Thanks

Padam

LikeLike

Hi Padam

No, as far as I know. Currently, STANDARD_HASH supports only different sizes of SHA-2, e.g. STANDARD_HASH(…, ‘SHA512’)

See Oracle documentation: https://docs.oracle.com/en/database/oracle/oracle-database/19/sqlrf/STANDARD_HASH.html

SHA-3 was introduced in 2012 and standardized in 2015, so I guess it takes a couple of years until all software vendors will support it.

Cheers, Dani

LikeLike

Thank You Dani for your quick turn around. Can you please suggest any alternatives? Via Java? Or other options. Thank You

LikeLike

Hi Padam

Is there really a need for SHA-3? I’m not a cryptographic specialist, but as far as I know, SHA-3 is useful if SHA-2 is not secure enough. If you use the hash function to build hash keys in the database, SHA-2 is definitely good enough. If you need it for password encryption or something like that, then SHA-3 is eventually more secure.

If you really need SHA-3, then an implementation with Java would be the only option I know.

Regards, Dani.

LikeLike

Thank You Dani, we have a requirement to integrate with another system for which the encoding has to be SHA3-512 and it cannot be SHA2, we will look for the Java options. Many thanks for your inputs so far.

Regards,

Padam

LikeLike

Hi Padam,

I could imagine that this is about the Hungarian invoicing :-). I found a solution using bouncycastle (https://www.bouncycastle.org/). Just download bcprov-ext-jdk15on-164.jar and bcprov-jdk15on-164.jar. Load them into the database using the loadjava-command (loadjava -u username/password@database -o -r -v bcprof-jdk15on-164.jar).

Create the Java Source:

create or replace and compile java source named my_crypto as

package my.crypto;

import org.bouncycastle.jcajce.provider.digest.SHA3;

import org.bouncycastle.util.encoders.Hex;

public class Crypto {

public static String getHashSHA3_512(String iHashText) {

SHA3.DigestSHA3 sha3512Digest = new SHA3.DigestSHA3(512);

sha3512Digest.update(iHashText.getBytes());

return Hex.toHexString(sha3512Digest.digest());

}

}

/

Then create the the PL/SQL-Function calling the java source:

CREATE OR REPLACE FUNCTION js_getHashSHA3_512

(i_text IN VARCHAR2

)

RETURN VARCHAR2

IS LANGUAGE JAVA NAME ‘my.crypto.Crypto.getHashSHA3_512(java.lang.String) return java.lang.String’

;

Ready to go using:

SELECT js_getHashSHA3_512(‘TextToHash’) FROM dual;

LikeLike

Hi Markusld,

Thank you for your response, And yes this is for HU Invoice reporting. Do you know how reliable is this third party software and use its libraries. We found this also on Google.

Regards,

Padam

LikeLike

Hi Padam,

As this is an Australian charity (https://en.wikipedia.org/wiki/Bouncy_Castle_(cryptography)) I suppose that it is a trustworthy, reliable source that is also used in wide range of products (it seems Oracle itself also uses it in MySQL – https://docs.oracle.com/cd/E17952_01/connector-net-license-com-en/license-bouncy-castle-1-7.html). I have used it before in an SFTP-implementation without problems.

However, what I forgot to mention, it might be necessary to replace the security-policy-files in the database’s security directory with files downloaded from Oracle (https://www.oracle.com/java/technologies/jce-6-download.html), because the standard java-implementation has restricted key lengths.

Best wishes

Markus

LikeLike

Excellent information.

I’m trying to use the standard_hash function in the Tableau v2019.4 custom sql. It doesn’t show the this column (though I had alias) Any one has idea about it?

LikeLike

STANDARD_HASH is an Oracle specific function and probably not available in Tableau custom sql. What you could to (if you running Tableau on an Oracle database): Create a view on Oracle that calls the function and returns it as an attribute.

LikeLike

Hi Dani

Thanks for the excellent article, I just wonder how can encrypt an xml doc with the STANDARD_HASH with Oracle PL/SQL developer.

Thanks in advance.

Seham.

LikeLike

Hi Seham

STANDARD_HASH does not support CLOB types, neither on XMLTYPE. In this case, you have to use DBMS_CRYPTO. A possible solution you can find here:

https://stackoverflow.com/questions/23237326/how-to-use-ora-hash-on-a-column-of-datatype-xmltype/23237770#23237770

Cheers, Dani.

LikeLike

Hi Dani

Thanks for the fast reply . Can we use it to take in varchar and then parse it to clob, and if so how can we do that as it’s faster than DBMS_crybto.

Thanks

Seham.

LikeLike

Hi ,

We have a requirement where we want to hide or encrypt or obfuscate some given fields like customer email , phone_no so that when one queries it in database it remain unreadable to him .

When application pulls the data from the database , the data should go unreadable to application layer and if the person is eligible to see that data , the application should make it readable .

So that data customer sensitive data columns should be unreadable in database , while getting pulled into application in transit and in application side , data should become readable only to the authorized person on the application .

We are using Oracle database 12c and java in frontend .What solution we can follow for this ?

We considered dbms_crypto but the encrypted values are overshooting the allowed database column limits and causing overflow error .

LikeLike

Hi Thomas

Have a look at Oracle Data Redaction (part of the Oracle Advanced Security option), that’s probably what you are looking for:

https://docs.oracle.com/en/database/oracle/oracle-database/19/asoag/introduction-to-oracle-data-redaction.html

LikeLike

Thank you Dani for reply,

The requirement is

1.customer data remains hidden from db developers/admin ,if developers/qa/ query select customer data, he should see it unreadable or obfuscated/hidden/encrypted

[dbms_redact can work here]

2.The database fields that need to be covered , we have list of them , they are static.

[dbms_redact can work here]

3.when application queries data , it travels hidden / encrypted /hidden.

[dbms_redact can work here]

4.At application if user is eligible to see that data , it should be shown in readable form to allow him to make changes. and it will again encrypted/hidden/obfuscated once user makes changes and submits and stored in the database.

In the case of updates from application to UI to database , suppose customer updates email from abc@gmail.com to def@gmail , the text def@gmail will go readable from application to database , does dbms redact comes with any additional feature to handle this scenario ?

regards

Thomas

LikeLike

Hi Thomas

For my point of view, all these requirements can be implemented with dbms_redact. But because your questions are not related to the original blog post above, I think this is the wrong place for further discussions here.

LikeLike

Hi, I was confused about your statement about not being able to run directly in SQL. I have been running it for years:

select

cast(DBMS_CRYPTO.hash

(UTL_RAW.cast_to_raw

(

‘Mike’ || ‘~’

|| ‘James’ || ‘~’

|| ‘John’ || ‘~’

|| ‘Ken’ || ‘~’

|| 123 || ‘~’

|| 456 || ‘~’

|| 321 || ‘~’ — for future archive

|| to_char(sysdate,’MMDDYYYY’) || ‘~’

),2) as varchar2(32)) link_key

,cast(DBMS_CRYPTO.hash

(UTL_RAW.cast_to_raw

(

‘Mike’ || ‘~’

|| ‘James’ || ‘~’

|| ‘John’ || ‘~’

|| ‘Ken’ || ‘~’

|| 123 || ‘~’

|| 456 || ‘~’

|| 321 || ‘~’ — for future archive

|| to_char(sysdate,’MMDDYYYY’) || ‘~’

),3) as varchar2(40)) link_key

,cast(DBMS_CRYPTO.hash

(UTL_RAW.cast_to_raw

(

‘Mike’ || ‘~’

|| ‘James’ || ‘~’

|| ‘John’ || ‘~’

|| ‘Ken’ || ‘~’

|| 123 || ‘~’

|| 456 || ‘~’

|| 321 || ‘~’ — for future archive

|| to_char(sysdate,’MMDDYYYY’) || ‘~’

),4) as varchar2(64)) link_key

FROM dual;

LikeLike

Hi Michael

I wrote: “…the constants cannot be used directly in SQL”. The function DBMS_CRYPTO.hash can be used, as you show in your example, But the second parameter cannot be one of the predefined constants. In your example, you use the number 2, 3 and 4 instead of DBMS_CRYPTO.HASH_MD5, etc.

LikeLike